S14: Virtual Dog

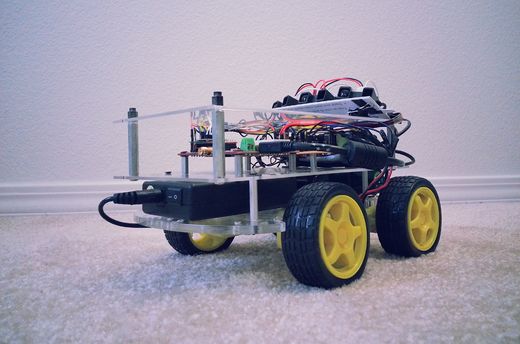

Project Title: Virtual Dog - An Object Following Robot

One of our friends has a dog who likes to play with a remote-control car. He runs away from the car when it approaches him, but when the car moves away from him, he likes to follow it! So we thought of making a toy that does this job automatically. It tries to follow an object, but when the object comes too close, it moves in the opposite direction to maintain a predefined distance between the object and itself. Hence the name Virtual Dog! The concept can be applied in many places: creative toys that keep your kids and pets occupied, shopping carts that follow you through the mall so that you never need to push your cart, or vehicles that drive themselves automatically in heavy traffic.

Abstract

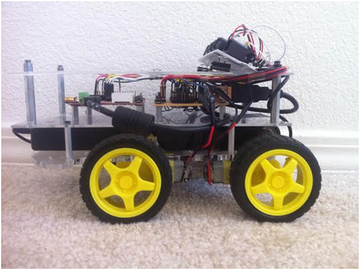

In this project we built a device that can track and follow a particular object. Tracking and following are done in two dimensions, i.e., not just forward-backward movement but also left-right. This is achieved with multiple distance sensors mounted on a robot and a target reference object. The robot continuously monitors the position of the target object; if that position changes, the robot repositions itself to maintain the desired relationship between the two.

Objectives & Introduction

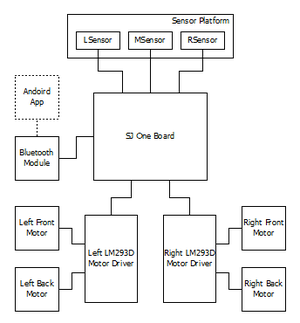

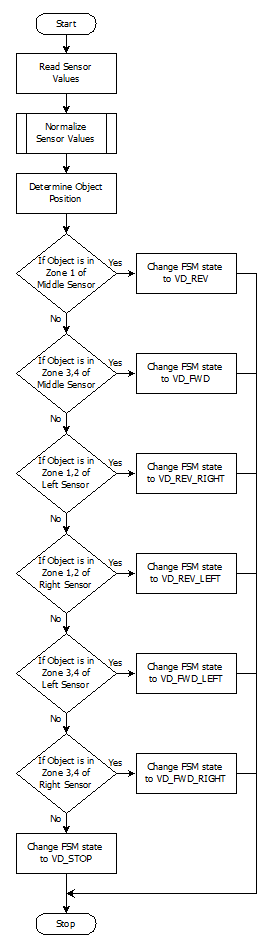

The main objective is to build an object-following robot that follows a particular object in two dimensions. To achieve this, we divided our design into two parts, viz., the sensor module and the motor driver module.

The sensor module reads values from the sensors, normalizes them, and runs an algorithm that determines the position of the target object. The output of this algorithm is passed to the motor driver module.

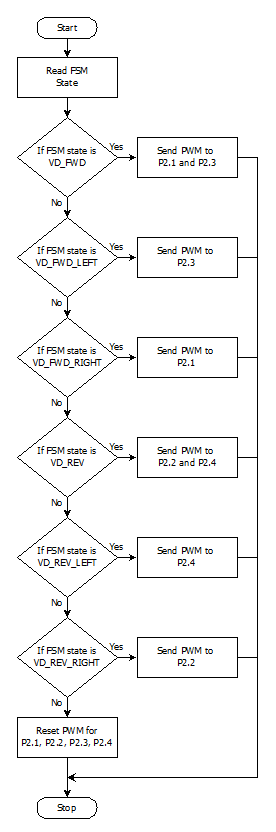

The motor driver module decides the speed and direction needed to reposition the robot, if required. It also generates the appropriate PWM output for each individual motor so that the robot as a whole moves in the desired direction at the appropriate speed.
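As a minimal sketch of the mixing step in the motor driver module, assume a differential-drive layout where the two wheels on each side share one command. An L293D sets each motor's direction through its two input pins and its speed through the PWM duty cycle on its enable pin, so each side is described here by a direction flag and a duty cycle. The `direction_t` names, `motor_mix`, and the 50 % inner-side ratio are hypothetical, not taken from the project's code.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical direction requests produced by the decision logic. */
typedef enum {
    DIR_STOP, DIR_FWD, DIR_REV,
    DIR_FWD_LEFT, DIR_FWD_RIGHT, DIR_REV_LEFT, DIR_REV_RIGHT
} direction_t;

/* Command for one side (two wheels) of the robot. */
typedef struct {
    bool forward;   /* L293D input polarity: true = forward */
    uint8_t duty;   /* PWM duty cycle on the enable pin, 0..100 % */
} side_cmd_t;

typedef struct {
    side_cmd_t left;
    side_cmd_t right;
} drive_cmd_t;

/* Assumed ratio: the inner side runs at half speed while turning. */
#define TURN_INNER_PERCENT 50u

/* Map a direction and a base speed (0..100 %) to per-side commands. */
drive_cmd_t motor_mix(direction_t dir, uint8_t speed)
{
    if (speed > 100u) {
        speed = 100u;
    }
    uint8_t inner = (uint8_t)((speed * TURN_INNER_PERCENT) / 100u);
    bool fwd = (dir == DIR_FWD || dir == DIR_FWD_LEFT || dir == DIR_FWD_RIGHT);
    drive_cmd_t cmd = { { fwd, speed }, { fwd, speed } };

    switch (dir) {
    case DIR_STOP:
        cmd.left.duty = 0u;
        cmd.right.duty = 0u;
        break;
    case DIR_FWD_LEFT:
    case DIR_REV_LEFT:
        /* Slow the left side: forward it veers left, in reverse it backs up curving left. */
        cmd.left.duty = inner;
        break;
    case DIR_FWD_RIGHT:
    case DIR_REV_RIGHT:
        /* Slow the right side: the mirror image of the left turn. */
        cmd.right.duty = inner;
        break;
    default:
        break;   /* DIR_FWD / DIR_REV: both sides run at the same speed */
    }
    return cmd;
}
```

For example, `motor_mix(DIR_FWD_LEFT, 80)` gives the left side 40 % and the right side 80 %, both forward, so the robot veers left. A fixed inner-side ratio keeps the sketch short; a tuned controller might instead scale it with how far the object has drifted sideways.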

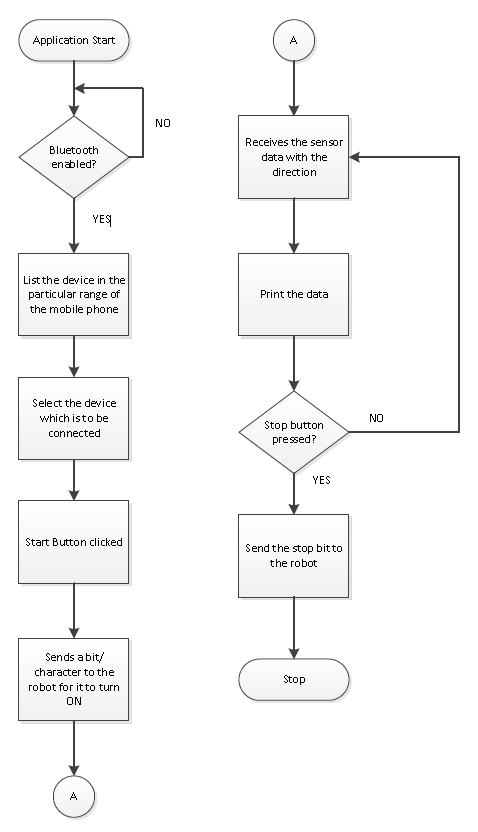

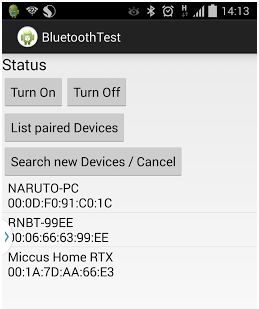

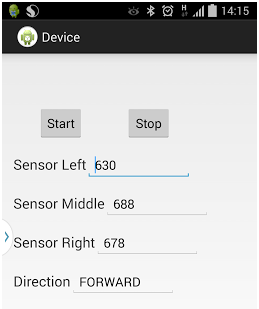

We also built an Android application that communicates with the robot over Bluetooth. The application can start and stop the robot, monitor real-time sensor outputs, and display the decisions taken by our algorithm.
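The RN42-XV connects to the board through a UART serial link, so one simple way to support start/stop and live monitoring is a small text protocol over that link. The sketch below is hypothetical: the command bytes `'S'` and `'P'`, the comma-separated telemetry line, and the function names are illustrative assumptions, not the project's actual protocol.

```c
#include <stdbool.h>
#include <stdint.h>
#include <stdio.h>

/* Hypothetical one-byte commands sent by the Android app. */
#define CMD_START 'S'
#define CMD_STOP  'P'

/* Update the run flag from one received byte; returns true if it was a command. */
bool handle_command(char c, bool *running)
{
    if (c == CMD_START) {
        *running = true;
        return true;
    }
    if (c == CMD_STOP) {
        *running = false;
        return true;
    }
    return false;   /* ignore anything else, e.g. line endings */
}

/* Build one telemetry line: three filtered sensor readings plus the decision. */
int format_telemetry(char *buf, size_t len,
                     const uint16_t sensors[3], const char *decision)
{
    return snprintf(buf, len, "%u,%u,%u,%s\n",
                    (unsigned)sensors[0], (unsigned)sensors[1],
                    (unsigned)sensors[2], decision);
}
```

A line-oriented text format like this is easy to split on the Android side and easy to read in a serial terminal while debugging.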

Team Members & Responsibilities

- Hari

- Implemented Sensor Driver, Algorithm to normalize Sensor values, and Android Application.

- Manish

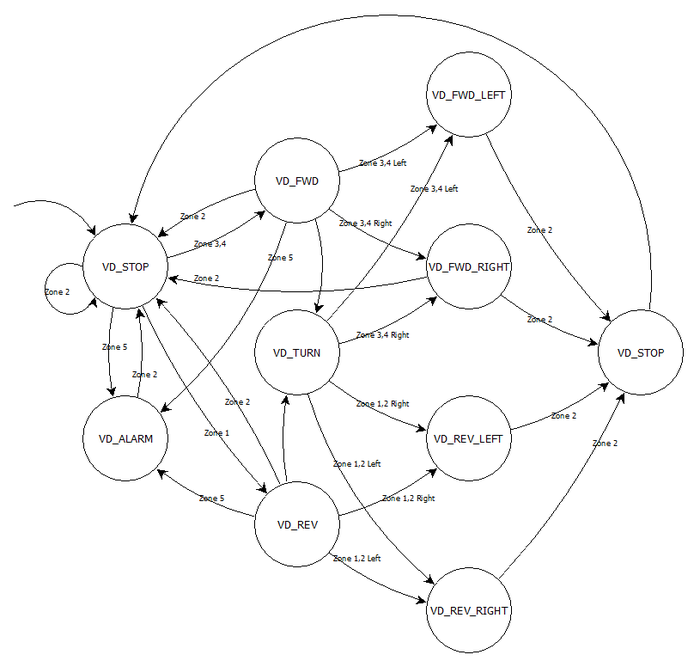

- Implemented Central Control Logic, and FSM.

- Viral

- Implemented Motor Driver, Motor State Machine, and Bluetooth Driver.

Schedule

| Week# | Task | Estimated Completion Date | Status | Notes |
|---|---|---|---|---|
| 1 | Order Parts | 3/16 | Partially Completed | Sensor for distance measurement not yet finalized. Ordered the other parts. |
| 2 | Sensor Study | 3/23 | Completed (3/30) | Research on sensors took more time than expected because the speed constraints of the candidate sensors conflicted with our requirements. Finally decided to go with IR proximity sensors. Sensors ordered. |
| 3 | Sensor Controller Implementation | 3/30 | Completed (4/6) | Three sensors interfaced with the on-board ADC pins. Controller implemented to determine the direction of movement based on those three sensors. |
| 4 | Servo and Stepper Motor Controller Implementation | 4/6 | Completed (4/6) | Initially planned to use a stepper motor for steering and a servo to move the robot, but due to power constraints we decided to use DC motors to make a 4WD robot. Controller implemented to move and turn the robot based on differential wheel speeds. |
| 5 | Central Controller Logic Implementation | 4/13 | Completed (4/13) | Integrated both controllers and developed basic logic to control the wheels based on sensor input. |
| 6 | Assembly and Building Final Chassis | 4/20 | Completed (4/20) | Mounted all hardware parts on the chassis to make a standalone robot. Central controller logic still being tuned. |
| 7 | Unit Testing and Bug Fixing | 4/27 | Completed (5/4) | Tested various combinations of object movement and tuned our algorithm accordingly. Tuning took more time than expected because of many corner cases. |
| 8 | Testing and Finishing Touch | 5/4 | Completed (5/11) | Faced a strange problem in the final stages. Earlier, the sensors gave a linear output for distance vs. ADC value, but over time we realized that the robot was no longer following the way it used to. So we had to recalibrate distance vs. ADC value and change our algorithm accordingly. |
| 9 | Android Application using Bluetooth | - | Completed (5/22) | Developed an Android application through which we can start and stop the robot and collect real-time data for sensor values as well as the decisions taken by the robot. |

Parts List & Cost

| # | Part Description | Quantity | Manufacturer | Part No | Cost |

|---|---|---|---|---|---|

| 1 | SJOne Board | 1 | Preet | - | $80.00 |

| 2 | IR Distance Sensor (20cm - 150cm) | 3 | Adafruit | GP2Y0A02YK | $47.85 |

| 3 | DC Motor (12V) | 4 | HSC Electronics | - | $6.00 |

| 4 | Wheels | 4 | Pololu | - | $8.00 |

| 5 | Battery 5V/1A 10000mAh | 1 | Amazon | - | $40 |

| 6 | Battery 12V/1A 3800mAh | 1 | Amazon | - | $25 |

| 7 | L2938D (Motor Driver IC) | 2 | HSC Electronic | - | $5.40 |

| 8 | Chassis | 2 | Walmart | - | $12.00 |

| 9 | Accessories (Jumper wires, Nut-Bolts, Prototype board, USB socket) | - | - | - | $20.00 |

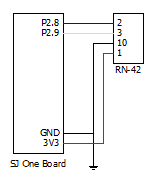

| 10 | RN42-XV Bluetooth Module | 1 | Sparkfun | WRL-11601 | $20.95 |

| Total (Excluding Shipping and Taxes) | $265.20 |

Design & Implementation

Hardware Design

System Block Diagram:

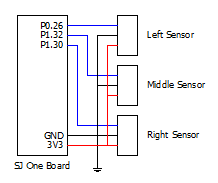

Proximity Sensor:

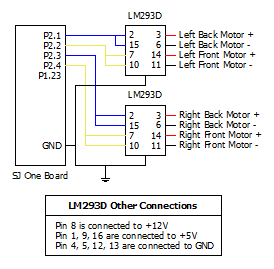

DC Motor:

L293D IC:

Bluetooth Module:

Hardware Interface

Software Design

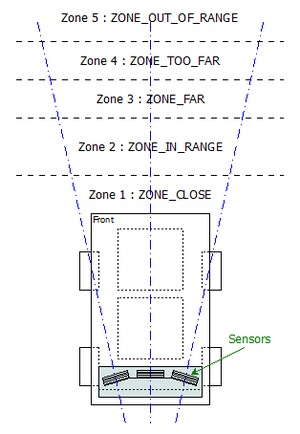

Idea Behind Central Control Logic: Since we use IR sensors to measure the distance between the object and the robot, we divided the area in front of the robot into five zones based on the range of the IR sensor. Our algorithm tries to keep the object in Zone2:ZONE_IN_RANGE at all times:

- Zone1:ZONE_CLOSE: the object has moved closer to the robot, so the algorithm tells the motor driver to move the robot backward.
- Zone2:ZONE_IN_RANGE: the object is at the desired distance and the robot holds its position.
- Zone3:ZONE_FAR: the robot starts moving towards the object at medium speed.
- Zone4:ZONE_TOO_FAR: the robot advances towards the object at increased speed until the object is back in Zone2:ZONE_IN_RANGE.
- Zone5:ZONE_NOT_IN_RANGE: the object moved too fast or suddenly disappeared, so the robot cannot determine its position. The robot stops and alerts the user with a buzzer and an LED indicator.

In all of these zones, the values from all three sensors are monitored, and based on them the robot also detects left and right movement of the object in four variants, viz., Forward Left, Forward Right, Backward Left, and Backward Right.
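The zone logic above can be sketched as a small stateless decision function. This is a hypothetical version, not the project's tuned code: the zone boundaries (40/60/90 cm), the 10 cm side margin, the per-zone speeds, the use of 0 as "no valid reading", and the assumption that the 135° sensor looks left and the 45° sensor looks right are all placeholders. VD_TURN is declared but never returned, because deciding that the object is turning needs a search timeout that a stateless function cannot express.

```c
#include <stdint.h>

typedef enum {
    ZONE_CLOSE = 1, ZONE_IN_RANGE, ZONE_FAR, ZONE_TOO_FAR, ZONE_NOT_IN_RANGE
} zone_t;

typedef enum {
    VD_STOP, VD_FWD, VD_REV, VD_TURN,
    VD_FWD_LEFT, VD_FWD_RIGHT, VD_REV_LEFT, VD_REV_RIGHT, VD_ALARM
} vd_state_t;

/* Hypothetical boundaries in cm; a distance of 0 means "no valid reading". */
#define CLOSE_MAX_CM     40u
#define IN_RANGE_MAX_CM  60u
#define FAR_MAX_CM       90u
#define SENSOR_MAX_CM   150u
#define SIDE_MARGIN_CM   10u

zone_t classify_zone(uint16_t cm)
{
    if (cm == 0u || cm > SENSOR_MAX_CM) return ZONE_NOT_IN_RANGE;
    if (cm < CLOSE_MAX_CM)              return ZONE_CLOSE;
    if (cm <= IN_RANGE_MAX_CM)          return ZONE_IN_RANGE;
    if (cm <= FAR_MAX_CM)               return ZONE_FAR;
    return ZONE_TOO_FAR;
}

/* Assumed speed per zone, as a PWM duty cycle in percent. */
uint8_t zone_speed(zone_t z)
{
    switch (z) {
    case ZONE_CLOSE:   return 40u;   /* reverse */
    case ZONE_FAR:     return 50u;   /* medium speed */
    case ZONE_TOO_FAR: return 80u;   /* increased speed */
    default:           return 0u;    /* hold position or stop */
    }
}

/* True if reading a is valid and clearly nearer than reading b. */
static int nearer(uint16_t a, uint16_t b)
{
    int a_ok = (a != 0u && a <= SENSOR_MAX_CM);
    int b_ok = (b != 0u && b <= SENSOR_MAX_CM);
    return a_ok && (!b_ok || a + SIDE_MARGIN_CM < b);
}

/* left = 135 deg sensor, center = 90 deg, right = 45 deg (assumed). */
vd_state_t decide_state(uint16_t left, uint16_t center, uint16_t right)
{
    /* Follow a side sensor only if it sees the object clearly nearer than the others. */
    int go_left  = nearer(left, center) && nearer(left, right);
    int go_right = nearer(right, center) && nearer(right, left);
    uint16_t d = go_left ? left : (go_right ? right : center);

    switch (classify_zone(d)) {
    case ZONE_IN_RANGE:
        return VD_STOP;                                   /* Zone2: hold */
    case ZONE_FAR:
    case ZONE_TOO_FAR:                                    /* Zone3/4: follow */
        return go_left ? VD_FWD_LEFT : go_right ? VD_FWD_RIGHT : VD_FWD;
    case ZONE_CLOSE:                                      /* Zone1: back away */
        return go_left ? VD_REV_LEFT : go_right ? VD_REV_RIGHT : VD_REV;
    default:
        return VD_ALARM;                                  /* Zone5: object lost */
    }
}
```

For example, with only the right sensor seeing the object at 30 cm, `decide_state(0, 100, 30)` returns `VD_REV_RIGHT`, and with no valid reading at all it returns `VD_ALARM`.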

State Machine Diagram:

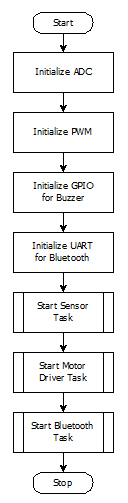

Initialization:

Sensor Driver:

Motor Driver:

Virtual DOG Android Application:

Implementation

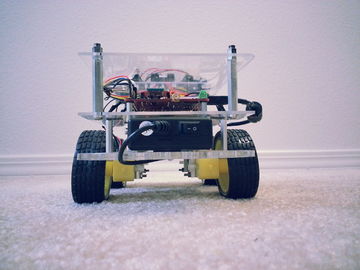

Actual Implementation Images:

Application Screenshots:

LED Conventions:

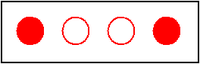

When the 1st and 4th LEDs blink, the object is in range, i.e., in Zone2, and the FSM state is VD_STOP.

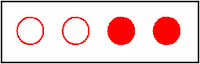

When the 2nd and 3rd LEDs glow continuously, the object has moved forward, i.e., into Zone3/Zone4, and the FSM state is VD_FWD.

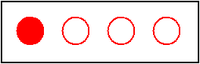

When the 1st and 4th LEDs glow continuously, the object is coming closer, i.e., it is in Zone1, and the FSM state is VD_REV.

When the 1st and 4th LEDs blink, the object is taking a turn and the robot is trying to figure out where it has turned; the FSM state is VD_TURN. If the robot cannot find the turned object, the object has moved out of range.

When the 1st and 2nd LEDs glow continuously, the object is turning left and the FSM state is VD_FWD_LEFT.

When the 3rd and 4th LEDs glow continuously, the object is turning right and the FSM state is VD_FWD_RIGHT.

When only the 1st LED glows continuously, the object is turning left in the backward direction and the FSM state is VD_REV_LEFT.

When only the 4th LED glows continuously, the object is turning right in the backward direction and the FSM state is VD_REV_RIGHT.

When all LEDs blink fast, an obstacle has been detected and the FSM state is VD_ALARM.
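The LED conventions above can be captured in a lookup table indexed by FSM state. In this sketch the LED choices follow the list above (so VD_STOP and VD_TURN share one pattern, as described), while the bit layout, `led_mask_at`, and the 500 ms / 100 ms blink half-periods are assumptions. The returned mask could be written to the board's four LEDs from a periodic task.

```c
#include <stdint.h>

typedef enum {
    VD_STOP, VD_FWD, VD_REV, VD_TURN,
    VD_FWD_LEFT, VD_FWD_RIGHT, VD_REV_LEFT, VD_REV_RIGHT, VD_ALARM,
    VD_STATE_COUNT
} vd_state_t;

/* Bit n-1 drives LED n (LED1..LED4); this layout is an assumption. */
#define LED1 0x1u
#define LED2 0x2u
#define LED3 0x4u
#define LED4 0x8u

typedef struct {
    uint8_t mask;       /* LEDs used by this state */
    uint16_t blink_ms;  /* 0 = steady, otherwise half-period of the blink */
} led_pattern_t;

/* LED choices follow the conventions above; blink timings are assumed. */
static const led_pattern_t k_patterns[VD_STATE_COUNT] = {
    [VD_STOP]      = { LED1 | LED4, 500u },
    [VD_FWD]       = { LED2 | LED3, 0u },
    [VD_REV]       = { LED1 | LED4, 0u },
    [VD_TURN]      = { LED1 | LED4, 500u },   /* same pattern as VD_STOP */
    [VD_FWD_LEFT]  = { LED1 | LED2, 0u },
    [VD_FWD_RIGHT] = { LED3 | LED4, 0u },
    [VD_REV_LEFT]  = { LED1, 0u },
    [VD_REV_RIGHT] = { LED4, 0u },
    [VD_ALARM]     = { LED1 | LED2 | LED3 | LED4, 100u },   /* fast blink */
};

/* LEDs that should be lit for `state` at time `now_ms`. */
uint8_t led_mask_at(vd_state_t state, uint32_t now_ms)
{
    if (state >= VD_STATE_COUNT) {
        return 0u;
    }
    const led_pattern_t *p = &k_patterns[state];
    if (p->blink_ms == 0u) {
        return p->mask;   /* steady pattern */
    }
    /* On during even half-periods, off during odd ones. */
    return ((now_ms / p->blink_ms) % 2u == 0u) ? p->mask : 0u;
}
```

Keeping the patterns in one table makes the conventions easy to audit against the list and to change without touching the FSM code.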

Testing & Technical Challenges

Normalization Algorithm:

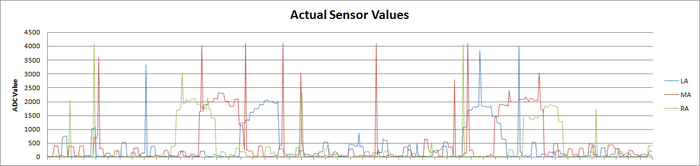

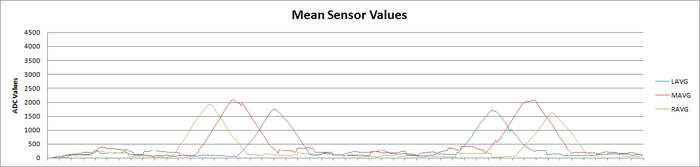

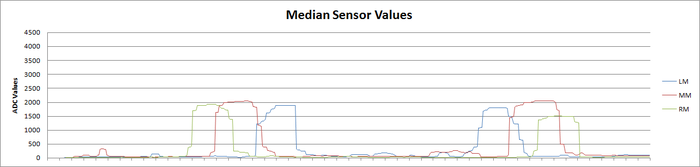

The values read from the sensors are not stable, so we needed an algorithm that produces stable values and removes spikes. We experimented extensively and finally developed an algorithm that gives fairly stable sensor values without hampering performance. The graphs above show the experimental results:

The graph of the raw sensor values, recorded while the object was moved from right to left, shows that the values are clearly unstable and contain many spikes.

The graph of the mean of the past 30 sensor values shows fairly stable values, but averaging does not help to detect the object when it moves very fast.

The graph of the median of the past 30 values shows the most stable values we could obtain. So we used the median to compute the sensor values and used these values in our algorithm.
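A minimal sketch of the median-of-the-last-30-samples filter described above, assuming one filter instance per sensor. The struct and function names are hypothetical; sorting a 30-element copy with insertion sort is cheap at this size and keeps the ring buffer in time order. Because the median ignores a few extreme values, a single spike cannot move the output the way it shifts a mean.

```c
#include <stdint.h>
#include <string.h>

#define WINDOW 30   /* past 30 samples, as in the experiments above */

typedef struct {
    uint16_t buf[WINDOW];
    uint8_t next;    /* index of the slot to overwrite next */
    uint8_t count;   /* number of valid samples (<= WINDOW) */
} median_filter_t;

void median_init(median_filter_t *f)
{
    memset(f, 0, sizeof *f);
}

/* Add one raw ADC sample and return the median of the stored samples. */
uint16_t median_update(median_filter_t *f, uint16_t sample)
{
    f->buf[f->next] = sample;
    f->next = (uint8_t)((f->next + 1u) % WINDOW);
    if (f->count < WINDOW) {
        f->count++;
    }

    /* Sort a copy so the ring buffer itself is left untouched. */
    uint16_t tmp[WINDOW];
    memcpy(tmp, f->buf, f->count * sizeof tmp[0]);
    for (uint8_t i = 1; i < f->count; i++) {
        uint16_t v = tmp[i];
        int j = (int)i - 1;
        while (j >= 0 && tmp[j] > v) {
            tmp[j + 1] = tmp[j];
            j--;
        }
        tmp[j + 1] = v;
    }
    /* Odd count: true median. Even count: lower of the two middle samples. */
    return tmp[(f->count - 1u) / 2u];
}
```

Each of the three sensors would get its own `median_filter_t`, and the filtered value, rather than the raw ADC reading, would feed the distance conversion and zone logic.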

Motor Selection:

Stepper Motor:

Initially we planned to use a stepper motor together with our DC motors so that we could control the position of the robot very precisely. However, the motor we planned to use was very heavy, which would have increased the weight of the chassis to an extreme level, and a stepper motor's torque degrades at higher speeds, which is undesirable for our project. So we decided to drop the stepper motor.

Servo Motor:

Initially we planned to use servo motors instead of DC motors so that we could provide high torque to the robot's wheels. However, servo motors have disadvantages; for example, a servo continues to draw high current when it is stuck at a particular position, which makes it unsuitable for our project.

DC Motor:

Finally, we selected DC motors instead of stepper and servo motors. DC motors have several advantages: they can run at very high RPM, provide full rotation, continue to rotate until power is removed, and can be easily controlled using PWM. These advantages match the requirements of our project.

Sensor Selection:

Nordic Wireless:

Initially we planned to use the Nordic wireless (nRF24L01+) module on our SJ One board to estimate the distance between the robot and the object so that we could change the robot's position accordingly. When we experimented with two Nordic modules and tried to calculate the distance between them, the results were not favorable: the measured value changed negligibly when we moved one module relative to the other. Since these results did not suit our project, we dropped the idea of using the wireless module.

Ultrasonic Sensor:

After the unsatisfactory results with the wireless module, we planned to use an ultrasonic sensor to measure the distance between the object and the robot. The results of this experiment were not favorable either: although the range of the ultrasonic sensor was very good, its beam angle was too wide, so it detected many objects within its range and we could not measure the exact distance between the target object and the robot. So we dropped the idea of using an ultrasonic sensor.

Proximity Sensor:

We then tried a proximity sensor to measure the distance between the object and the robot. The proximity sensor we used has a range of 20 cm to 150 cm, and the results of the experiment were favorable: we could measure the distance between the robot and the object accurately. So we decided to use the proximity sensor in our project.

Motor Calibration Issue:

Having decided through a number of experiments to use DC motors, we planned to use four of them, one for each wheel. After connecting the motors to the wheels, we checked the alignment of all four wheels and, where they were misaligned, aligned them by trial and error. The next issue was to run each motor at a different speed from the same supply voltage so that we could move the robot in any direction. The speed of each of the four motors is controlled individually by computing a separate PWM value for each motor.
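One way to compute a separate PWM value per motor is to apply a per-motor trim factor on top of the commanded duty cycle and convert the result into timer ticks, since PWM peripherals such as the LPC17xx's set the duty cycle with a match value relative to the period. This is a sketch under assumptions: `motor_match_value`, the 1000-tick period, and the trim percentages are hypothetical placeholders, which would be found by running all motors at the same command and slowing the fastest ones.

```c
#include <stdint.h>

#define NUM_MOTORS       4
#define PWM_PERIOD_TICKS 1000u   /* hypothetical PWM period in timer ticks */

/* Hypothetical per-motor trim in percent (100 = no correction). */
static const uint8_t k_trim_percent[NUM_MOTORS] = { 100u, 96u, 100u, 93u };

/* Convert a commanded duty (0..100 %) for one motor into a PWM match value. */
uint32_t motor_match_value(uint8_t motor, uint8_t duty_percent)
{
    if (motor >= NUM_MOTORS) {
        return 0u;   /* unknown motor: keep it off */
    }
    if (duty_percent > 100u) {
        duty_percent = 100u;
    }
    /* duty (0..100) times trim (0..100) gives 0..10000 hundredths of a percent. */
    uint32_t trimmed = (uint32_t)duty_percent * k_trim_percent[motor];
    return (trimmed * PWM_PERIOD_TICKS) / 10000u;
}
```

For example, a 50 % command becomes 500 ticks on motor 0 but 480 ticks on motor 1, compensating for a motor that runs slightly fast at the same voltage.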

Sensor Calibration Issue:

Having decided to use proximity sensors, we had to solve a number of calibration issues. First, we had to decide how many sensors our project required; we decided to begin our experiments with three. Next, we had to decide where to place them on the robot. After many experiments we found that when the target object comes within 20 cm of the robot, all the sensors start returning garbage values. So we placed the sensors at the back of the robot, facing forward, so that they do not return garbage values even when the target object is very close to the robot. Then we had to decide the angles at which to mount the sensors so that they can detect the target object anywhere between 0 and 180 degrees; after a number of experiments we placed the three sensors at 45, 90, and 135 degrees. Finally, with the sensors in position, we had to determine the distance between the object and the robot. For this we apply the normalization method to the sensor values so that we can estimate the correct distance between the object and the robot.
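Because the distance vs. ADC relationship had to be recalibrated late in the project (see the schedule notes), a table-driven conversion turns recalibration into a data change rather than a code change. The sketch below interpolates linearly between neighbouring calibration points. The calibration points are placeholders to be replaced with values measured on the robot, and `adc_to_cm` is a hypothetical name; the only property relied on is that this type of IR sensor's output falls as distance grows over its 20 cm to 150 cm range.

```c
#include <stddef.h>
#include <stdint.h>

typedef struct {
    uint16_t adc;   /* filtered 12-bit ADC reading */
    uint16_t cm;    /* measured distance for that reading */
} cal_point_t;

/* Placeholder calibration points, ordered by decreasing ADC value. */
static const cal_point_t k_cal[] = {
    {3100u,  20u}, {2500u,  30u}, {1950u,  40u}, {1350u,  60u},
    {1050u,  80u}, { 850u, 100u}, { 700u, 120u}, { 550u, 150u},
};
#define CAL_N (sizeof k_cal / sizeof k_cal[0])

/* Convert an ADC reading to centimetres by linear interpolation between
 * neighbouring calibration points; returns 0 outside the calibrated range. */
uint16_t adc_to_cm(uint16_t adc)
{
    if (adc > k_cal[0].adc || adc < k_cal[CAL_N - 1].adc) {
        return 0u;   /* too close (garbage zone) or too far */
    }
    for (size_t i = 1; i < CAL_N; i++) {
        if (adc >= k_cal[i].adc) {
            const cal_point_t *hi = &k_cal[i - 1];   /* nearer point, larger ADC */
            const cal_point_t *lo = &k_cal[i];       /* farther point, smaller ADC */
            uint32_t span_adc = (uint32_t)(hi->adc - lo->adc);
            uint32_t span_cm  = (uint32_t)(lo->cm - hi->cm);
            uint32_t offset   = (uint32_t)(hi->adc - adc);
            /* Round to the nearest centimetre. */
            return (uint16_t)(hi->cm + (offset * span_cm + span_adc / 2u) / span_adc);
        }
    }
    return 0u;   /* not reached: the range check above guarantees a match */
}
```

Returning 0 for out-of-range readings lets downstream logic treat "too close" and "too far" readings the same way as a lost object.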

Android Application Issue:

Operations involving the UI have high priority in Android. Because of this, updating the text box with the sensor values and the direction threw an IOException, which crashed the application.

Solution: Run the thread that performs the receive function in the background, and update the UI only after the receive function has completed.

Establishing the initial pairing between the devices caused faults such as the socket not being open or having already been read.

Solution: These faults were caused by the abrupt termination of the previous socket connection. The socket must be closed on application exit, in every exception handler, and on any sudden termination.

Conclusion

The Virtual Dog was a very challenging and research-oriented project. We analyzed various sensors in detail while implementing it. The project was built on a small scale; it can serve as a prototype for applications such as a shopping cart that follows a person in crowded areas like grocery stores.

Project Video

Project Source Code

References

Acknowledgement

The hardware components were obtained from Amazon, Sparkfun, and Adafruit. Thanks to Preetpal Kang for providing the right guidance for our project.

References Used

LPC_USER_MANUAL

RN-42 Data Sheet

Socialledge Embedded Systems Wiki

L293D Data Sheet

IR Sensor Data Sheet